Well-written unit tests are one of the most effective tools for ensuring product quality. Unfortunately, not all unit tests are well written, and the poorly written ones are often a source of frustration and lost productivity. Here are the most common unit test issues I have encountered during my career.

Flaky unit tests

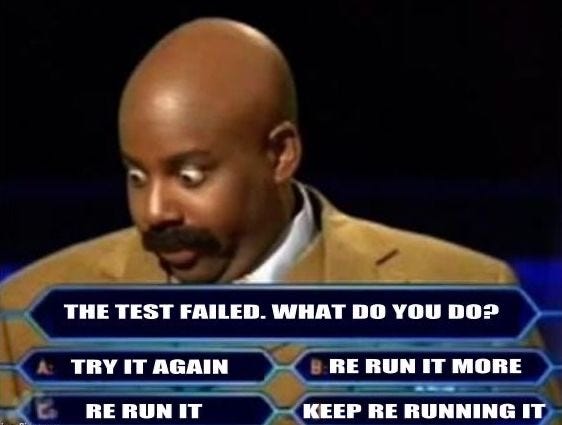

Flaky tests pass most of the time, but not always. They may randomly fail even though no code has changed. The quickest and most common “fix” developers employ is to re-run them. With time, the number of flaky tests grows, and even multiple re-runs are insufficient.

Flaky tests are caused primarily by the following:

- shared state

- dependency on external systems

Shared state is the number one cause of test flakiness. Static variables are a typical example. If one test sets a static variable and another passes only if this variable is set, the second test will fail when the order of execution changes.

Debugging flakiness caused by shared state is usually tricky because sharing state is rarely intentional.

Tests that depend on external systems tend to be flaky because the systems they rely on are outside their control. Any deployment, crash, or throttling can cause test failures. The network, which is inherently unreliable, is yet another contributor. The best fix is to mock external dependencies.
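As a rough sketch of what mocking an external dependency can look like, the example below uses Python's standard `unittest.mock`. The `fetch_display_name` function and the `get_user` client method are assumptions made up for this example, not part of any real API.

```python
from unittest.mock import Mock


def fetch_display_name(client, user_id):
    """Return a display name using a client that talks to a remote service."""
    response = client.get_user(user_id)  # hypothetical network call
    return response["name"].title()


def test_fetch_display_name():
    # The mock stands in for the remote service, so no network is involved.
    client = Mock()
    client.get_user.return_value = {"name": "ada lovelace"}

    assert fetch_display_name(client, 42) == "Ada Lovelace"
    client.get_user.assert_called_once_with(42)
```

Passing the client in as a parameter is one design choice that makes this substitution easy; patching a module-level client would be another.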

Multithreaded applications deserve special mention. Race conditions in the product code could make tests for these applications flaky, and finding the root cause is often challenging.
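For illustration, here is a small sketch of product code where a race condition could hide. The `Counter` class and `run_workers` helper are hypothetical. Without the lock, the read-modify-write in `increment` may interleave between threads and lose updates, and a test like the one below could then fail only occasionally.

```python
import threading


class Counter:
    """Hypothetical product code shared between threads."""

    def __init__(self):
        self.value = 0
        self._lock = threading.Lock()

    def increment(self):
        # The lock makes the read-modify-write atomic. Removing it is the
        # kind of product bug that can surface as a flaky test.
        with self._lock:
            self.value += 1


def run_workers(counter, workers=8, increments=1000):
    def work():
        for _ in range(increments):
            counter.increment()

    threads = [threading.Thread(target=work) for _ in range(workers)]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()


def test_counter_is_thread_safe():
    counter = Counter()
    run_workers(counter)
    assert counter.value == 8 * 1000
```

A passing run does not prove the absence of a race; it only shows that none was observed this time.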

Slow tests

Slow tests are a productivity killer. If running tests for a code change takes more than a few seconds, developers will use the wait as an excuse to find a distraction.

One of the most common reasons tests are slow is their dependency on external systems: network calls and the time to process the requests initiated by tests add up.

But tests that depend on external systems are also flaky, so slowness and flakiness go hand-in-hand.

Again, mocking external dependencies is the best fix to make tests fast and reliable.

If relying on external systems is intentional (e.g., end-to-end testing), it is worth separating end-to-end tests into a dedicated suite executed separately, for instance, as part of the nightly build.
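One possible way to keep such a suite separate, sketched with Python's standard `unittest`, is to skip end-to-end tests unless they are explicitly requested. The `RUN_E2E` environment variable and both test classes are assumptions made for this example.

```python
import os
import unittest

# Hypothetical opt-in flag; a nightly build would set RUN_E2E=1.
RUN_E2E = os.environ.get("RUN_E2E") == "1"


class PriceFormattingTests(unittest.TestCase):
    """Fast unit test with no external dependencies; always runs."""

    def test_formats_two_decimals(self):
        self.assertEqual(f"{3.5:.2f}", "3.50")


@unittest.skipUnless(RUN_E2E, "end-to-end tests run only when RUN_E2E=1")
class CheckoutEndToEndTests(unittest.TestCase):
    """Placeholder for tests that intentionally talk to real systems."""

    def test_checkout_against_real_services(self):
        # Calls to real services would go here.
        pass
```

With this layout, a regular local run reports the end-to-end tests as skipped, while the nightly job opts in by setting the flag.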

I was once on a team where running all the tests took more than two hours because most of them communicated with a database. These tests were also flaky, so merging more than one Pull Request a day was virtually impossible.

Bugs in unit tests

Tests are there to ensure the quality of the product, but nothing is there to ensure the quality of tests. As a result, tests may fail to do their job due to bugs. Unfortunately, identifying these bugs is not easy. Paying attention can help. For instance, if all tests continue to pass after a change that alters the behavior of the product code, it usually indicates either bugs in tests or missing test coverage.
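A small sketch of this idea: run the same test against a correct implementation and a deliberately broken one. All names below are hypothetical and exist only for this example.

```python
def apply_discount(price, percent):
    return price - price * percent / 100


def apply_discount_broken(price, percent):
    # Deliberately wrong: the discount is ignored.
    return price


def buggy_test(discount_fn):
    # Bug in the test: a 0% discount cannot tell a correct
    # implementation from one that ignores the discount.
    assert discount_fn(100, 0) == 100


def sound_test(discount_fn):
    assert discount_fn(100, 25) == 75
```

Here `buggy_test` passes for both functions, while `sound_test` passes for `apply_discount` and raises `AssertionError` for `apply_discount_broken`. A test that still passes against intentionally broken code deserves a closer look.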

Hard-to-maintain tests

Coupling tests tightly to implementation details usually causes numerous test failures after even simple product code changes. Keeping tests focused on functionality instead of on the implementation can significantly reduce the number of unnecessary test failures.
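The difference can be sketched with a hypothetical `Cart` class. The first test inspects a private attribute and would break if the internal storage changed; the second checks only observable behavior.

```python
class Cart:
    """Hypothetical product code, invented for this example."""

    def __init__(self):
        # Internal detail: item name -> (unit_price, quantity).
        self._items = {}

    def add(self, name, unit_price, quantity=1):
        _, current = self._items.get(name, (unit_price, 0))
        self._items[name] = (unit_price, current + quantity)

    def total(self):
        return sum(price * qty for price, qty in self._items.values())


def test_coupled_to_implementation():
    cart = Cart()
    cart.add("pen", 2, quantity=3)
    # Fails as soon as the storage format changes, even if totals stay correct.
    assert cart._items == {"pen": (2, 3)}


def test_behavior():
    cart = Cart()
    cart.add("pen", 2, quantity=3)
    cart.add("pen", 2)
    # Depends only on the public contract: 4 pens at 2 each.
    assert cart.total() == 8
```

If `Cart` later stored its items in a list of objects, only the first test would need to be rewritten.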

Writing “tests” only to hit the code coverage number

Test code written solely to meet code coverage goals is usually low quality. Assertions in such code are often missing because they don’t contribute to the coverage goal but can cause failures. Test coverage reported by tools can make the manager look good, but this test code is useless as it can’t prevent bugs. What’s worse, the high coverage hides areas that do need attention.
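As an illustration, both tests below execute the same lines of a hypothetical `parse_port` function, so a coverage tool would typically report the same numbers for each. Only the second one can catch a wrong result.

```python
def parse_port(text):
    """Hypothetical product code: parse a TCP port number."""
    port = int(text)
    if not 0 < port < 65536:
        raise ValueError(f"port out of range: {port}")
    return port


def test_for_coverage_only():
    # Executes every line of parse_port but verifies nothing about the results.
    parse_port("8080")
    try:
        parse_port("70000")
    except ValueError:
        pass


def test_with_assertions():
    assert parse_port("8080") == 8080
    try:
        parse_port("70000")
    except ValueError:
        pass
    else:
        raise AssertionError("expected ValueError for an out-of-range port")
```

If `parse_port` started returning the wrong number or stopped rejecting invalid ports, the first test would still pass.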

This is my list of the top 5 unit test issues. What are yours?