Until Entity Framework 6.1 was released store functions (i.e. Table Valued Functions and Stored Procedures) could be used in EF only when doing Database First. There were some workarounds which made it possible to invoke store functions in Code First apps but you still could not use TVFs in Linq queries which was one of the biggest limitations. In EF 6.1 the mapping API was made public which (along with some additional tweaks) made it possible to use store functions in your Code First apps. Note, that it does not mean that things will start working automagically once you upgrade to EF6.1. Rather, it means that it is now possible to help EF realize that it actually is capable of handling store functions even when Code First approach is being used. Sounds exciting doesn’t it? So, probably the question you have is:

How do I do that?

To understand how store functions could be enabled for Code First in EF 6.1 let’s take a look first at how they work in the Database First scenario. In Database First you define methods that are driving the execution of store functions in your context class (typically these methods are generated for you when you create a model from the database). You use these methods in your app by calling them directly or, in case of TVFs, in LINQ queries. One thing that is worth mentioning is that these methods need to follow certain conventions otherwise EF won’t be able to use them. Apart from methods defined in your context class store functions must also be specified in the artifacts describing the model – SSDL, CSDL and MSL (think: edmx). At runtime these artifacts are loaded to MetadataWorkspace object which contains all the information about the model.

In Code First the model is being built from the code when the application starts. Types are discovered using reflection and are configured with fluent API in the OnModelCreating method, attributes and/or conventions. The model is then loaded to the MetadataWorkspace (similarly to what happens in the Database First approach) and once this is done both – Code First and Database First operate in the same way. Note that the model becomes read-only after it has been loaded the MetadataWorkspace.

Because Database First and Code First converge at the MetadataWorkspace level enabling discovery of store functions in Code First along with additional model configuration should suffice to add general support for store functions in Code First. Model configuration (and therefore store function discovery) has to happen before the model is loaded to the MetadataWorkspace otherwise the metadata will be sealed and it will be impossible to tweak the model. There are three ways we can configure the model in Code First – configuration attributes, fluent API and conventions. Attributes are not rich enough to configure store functions. Fluent API does not have access to mapping. This leaves conventions. Indeed a custom model convention seems ideal – it gives you access to the model which in EF 6.1 not only contains conceptual and store models but also modifiable mapping information. So, we could create a convention which discovers methods using reflection, then configures store and conceptual models accordingly and defines the mapping. Methods mapped to store functions will have to meet some specific requirements imposed by Entity Framework. The requirements for methods mapped to table valued functions are the following:

- return type must be an

IQueryable<T>whereTis a type for which a corresponding EDM type exists – i.e. is either a primitive type that is supported by EF (for instanceintis fine whileuintwon’t work) or a non-primitive type (enum/complex type/entity type) that has been configured (either implicitly or explicitly) in your model - method parameters must be of scalar (i.e. primitive or enum) types mappable to EF types

- methods must have the

DbFunctionAttributewhose the first argument is the conceptual container name and the second argument is the function name. The container name is typically the name of theDbContextderived class however if you are unsure you can use the following code snippet to get the name:Console.WriteLine( ((IObjectContextAdapter) ctx).ObjectContext.MetadataWorkspace .GetItemCollection(DataSpace.CSpace) .GetItems<EntityContainer>() .Single() .Name); - the name of the method, the value of the

DbFunction.FunctionNameand thequeryStringname passed to theCreateQuerycall must all be the same - in some cases TVF mapping may require additional details – a column name and/or the name of the database schema. You can specify them using the

DbFunctionDetailsAttribute. The column name is required if the method is mapped to a TVF that returns a collection of primitive values. This is needed because EF requires providing the name of the column containing the values and there is no way of inferring this information from the code and therefore it has to be provided externally by setting theResultColumnNameproperty of theDbFunctionDetailsattribute to the name of the column returned by the function. The database schema name needs to be specified if the schema of the TVF being mapped is different from the default schema name passed to the convention constructor and can be done by setting theDatabaseSchemaproperty of theDbFunctionDetailsAttribute.

The requirements for methods mapped to stored procedures are less demanding and are the following:

- the return type has to be

ObjectResult<T>whereT, similarly to TVFs, is a type that can be mapped to an EDM type - you can also specify the name of the database schema if it is different from the default name by setting the

DatabaseSchemaproperty of theDbFunctionDetailsAttribute. (Because of how the result mapping works for stored procedures setting theResultColumnNameproperty has no effect)

The above requirements were mostly about method signatures but the bodies of the methods are important too. For TVFs you create a query using the ObjectContext.CreateQuery method while stored procedures just use ObjectContext.ExecuteFunction method. Below you can find examples for both TVFs and stored procedures (also notice how parameters passed to store functions are created). In addition the methods need to be members of the DbContext derived type which itself is the generic argument of the convention.

Currently only the simplest result mapping where names of the columns returned from the database match the names of the names of the properties of the target type (except for mapping to scalar results) is supported. This is actually a limitation in the EF Code First where more complicated mappings would currently be ignored in most cases even though they are valid from the MSL perspective. There is a chance of having more complicated mappings enabled in EF 6.1.1 if appropriate changes are checked in by the time EF 6.1.1 ships. From here there should be just one step to enabling stored procedures returning multiple resultsets in Code First.

Now you probably are a bit tired of all this EF mumbo-jumbo and would like to see

The Code

To see the custom convention in action create a new (Console Application) project. Once the project has been created add the EntityFramework.CodeFirstStoreFunctions NuGet package. You can add it either from the Package Manager Console by executing

Install-Package EntityFramework.CodeFirstStoreFunctions -Pre

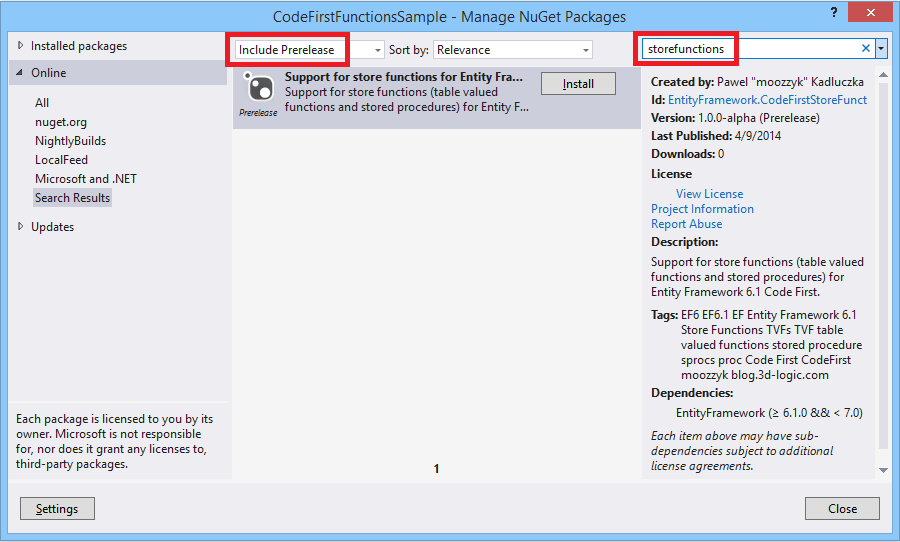

command or using the UI – right click the References in the solution explorer and select “Manage NuGet Packages”, then when the dialog opens make sure that the “Include Prerelease” option in the dropdown at the top of the dialog is selected and use “storefunctions” in the search box to find the package. Finally click the “Install” button to install the package.

Installing EntityFramework.CodeFirstStoreFunctions from UI

After the package has been installed copy and paste the code snippet from below to your project. This code demonstrates how to enable store functions in Code First.

public class Customer

{

public int Id { get; set; }

public string Name { get; set; }

public string ZipCode { get; set; }

}

public class MyContext : DbContext

{

static MyContext()

{

Database.SetInitializer(new MyContextInitializer());

}

public DbSet<Customer> Customers { get; set; }

protected override void OnModelCreating(DbModelBuilder modelBuilder)

{

modelBuilder.Conventions.Add(new FunctionsConvention<MyContext>("dbo"));

}

[DbFunction("MyContext", "CustomersByZipCode")]

public IQueryable<Customer> CustomersByZipCode(string zipCode)

{

var zipCodeParameter = zipCode != null ?

new ObjectParameter("ZipCode", zipCode) :

new ObjectParameter("ZipCode", typeof(string));

return ((IObjectContextAdapter)this).ObjectContext

.CreateQuery<Customer>(

string.Format("[{0}].{1}", GetType().Name,

"[CustomersByZipCode](@ZipCode)"), zipCodeParameter);

}

public ObjectResult<Customer> GetCustomersByName(string name)

{

var nameParameter = name != null ?

new ObjectParameter("Name", name) :

new ObjectParameter("Name", typeof(string));

return ((IObjectContextAdapter)this).ObjectContext.

ExecuteFunction<Customer>("GetCustomersByName", nameParameter);

}

}

public class MyContextInitializer : DropCreateDatabaseAlways<MyContext>

{

public override void InitializeDatabase(MyContext context)

{

base.InitializeDatabase(context);

context.Database.ExecuteSqlCommand(

"CREATE PROCEDURE [dbo].[GetCustomersByName] @Name nvarchar(max) AS " +

"SELECT [Id], [Name], [ZipCode] " +

"FROM [dbo].[Customers] " +

"WHERE [Name] LIKE (@Name)");

context.Database.ExecuteSqlCommand(

"CREATE FUNCTION [dbo].[CustomersByZipCode](@ZipCode nchar(5)) " +

"RETURNS TABLE " +

"RETURN " +

"SELECT [Id], [Name], [ZipCode] " +

"FROM [dbo].[Customers] " +

"WHERE [ZipCode] = @ZipCode");

}

protected override void Seed(MyContext context)

{

context.Customers.Add(new Customer {Name = "John", ZipCode = "98052"});

context.Customers.Add(new Customer { Name = "Natasha", ZipCode = "98210" });

context.Customers.Add(new Customer { Name = "Lin", ZipCode = "98052" });

context.Customers.Add(new Customer { Name = "Josh", ZipCode = "90210" });

context.Customers.Add(new Customer { Name = "Maria", ZipCode = "98074" });

context.SaveChanges();

}

}

class Program

{

static void Main()

{

using (var ctx = new MyContext())

{

const string zipCode = "98052";

var q = ctx.CustomersByZipCode(zipCode)

.Where(c => c.Name.Length > 3);

//Console.WriteLine(((ObjectQuery)q).ToTraceString());

Console.WriteLine("TVF: CustomersByZipCode('{0}')", zipCode);

foreach (var customer in q)

{

Console.WriteLine("Id: {0}, Name: {1}, ZipCode: {2}",

customer.Id, customer.Name, customer.ZipCode);

}

const string name = "Jo%";

Console.WriteLine("\nStored procedure: GetCustomersByName '{0}'", name);

foreach (var customer in ctx.GetCustomersByName(name))

{

Console.WriteLine("Id: {0}, Name: {1}, ZipCode: {2}",

customer.Id, customer.Name, customer.ZipCode);

}

}

}

}

In the code above I use a custom initializer to initialize the database and create a table-valued function and a stored procedure (in a real application you would probably use Code First Migrations for this). The initializer also populates the database with some data in the Seed method. The MyContext class is a class derived from the DbContext class and contains two methods that are mapped to store functions created in the initializer. The context class contains also the OnModelCreating method where we register the convention which will do all the hard work related to setting up our store functions. The Main method contains code that invokes store functions created when initializing the database. First, we use the TVF. Note, that we compose the query on the function which means that the whole query will be translated to SQL and executed on the database side. If you would like to see this you can uncomment the line which prints the SQL query in the above snippet and you will see the exact query that will be sent to the database:

SELECT

[Extent1].[Id] AS [Id],

[Extent1].[Name] AS [Name],

[Extent1].[ZipCode] AS [ZipCode]

FROM [dbo].[CustomersByZipCode](@ZipCode) AS [Extent1]

WHERE ( CAST(LEN([Extent1].[Name]) AS int)) > 3

(Back to the code) Next we execute the query and display results. Once we are done with the TVF we invoke the stored procedure. This is just an invocation because you cannot build queries on top of results returned by stored procedures. If you need any query-like (or other) logic it must be inside the stored procedure itself and otherwise you end up having a Linq query that is being run against materialized results. That’s pretty much the whole app. Just in case I am pasting the output the app produces below:

TVF: CustomersByZipCode('98052')

Id: 1, Name: John, ZipCode: 98052

Stored procedure: GetCustomersByName 'Jo%'

Id: 1, Name: John, ZipCode: 98052

Id: 4, Name: Josh, ZipCode: 90210

Press any key to continue . . .

Note that in both examples the return types are based on entity types. As I hinted above you can also use complex and scalar types for your results. Take a look at the End-to-End tests in the project itself – all scenarios are tested there.

That’s about what’s in alpha, so you may ask:

what’s next?

If you look at the code there are a few TODOs in the code. One of the most important is the support for nullable parameters. I am also thinking of removing the limitation where the method name in your DbContext derived class must ultimately match the name of the TVF in the database. If the workitem 2192 is resolved for the next version of EF I will be able to add support for non-default mapping. In addition I think it is very close from workitem 2192 to supporting stored procedures returning multiple resultsets. Not sure how useful it would be but it would be cool to see support for this feature which currently is kind of a dead feature because it is supported neither by CodeFirst nor by EF tooling.

Anything else?

The project is open source and is hosted on codeplex. You can get the sources from here. Try it and let me know what you think.